I have now calibrated the data and rejected the bad frames, so the original 1064 image files have been reduced to 96 LRGB light frames. In this post I will reduce this further to just four - one for each filter. I use MaxIm for stacking with the 'Auto - Star Matching' alignment method. I have previously found that bi-linear interpolation produces slightly sharper - but also noisier - images than bi-cubic interpolation. On the RGB data I'll go for bi-cubic since resolution does not matter so much here. On the luminance data, let's see what can be gained by choosing bi-linear over bi-cubic interpolation, and by stacking only the best half of the frames instead of all of them:

FWHM of stacked luminance frames:

| | Bi-cubic | Bi-linear |
|---|---|---|
| All frames | 3.53" | 3.45" |
| Best half | 3.41" | 3.31" |

By 'best half' I mean using only the sharpest 50% of the frames, ranked by image FWHM. The FWHM can be reduced by about 6% (from 3.53" to 3.31") by using bi-linear interpolation and 'best half' compared to using all images and bi-cubic interpolation. However, the SNR of faint regions of M100 is cut in half by doing so, and I think this is too high a price to pay. Instead, I’d rather have high SNR and then later try my luck with deconvolution, which really demands low-noise data. See for yourself below - there isn't much difference visible between the two results!

Enlarged sections of stacked luminance frames. There is no significant difference visible, but the computer tells me that SNR is better on the bi-cubic/all image.
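As a quick check of the numbers in the table, the sketch below computes the FWHM reduction of each combination relative to stacking all frames with bi-cubic interpolation. It also computes the SNR ratio one would expect from halving the frame count if noise averaged down as the square root of the number of frames. That square-root rule is an idealized assumption on my part, not something MaxIm reports, and the dictionary keys are just illustrative names.

```python
import math

# FWHM in arcseconds, taken from the table in this post.
# The (frames, interpolation) keys are illustrative labels only.
fwhm = {
    ("all", "bicubic"): 3.53,
    ("all", "bilinear"): 3.45,
    ("best_half", "bicubic"): 3.41,
    ("best_half", "bilinear"): 3.31,
}


def reduction_percent(baseline, value):
    """Relative reduction of `value` with respect to `baseline`, in percent."""
    return 100.0 * (baseline - value) / baseline


baseline = fwhm[("all", "bicubic")]
reductions = {key: reduction_percent(baseline, value) for key, value in fwhm.items()}

for (frames, interpolation), percent in reductions.items():
    print(f"{frames:>9} / {interpolation:<8}: {percent:4.1f}% sharper than all/bicubic")

# Idealized assumption: SNR scales with sqrt(number of frames),
# so keeping half of the frames scales SNR by sqrt(0.5).
ideal_snr_ratio = math.sqrt(0.5)
print(f"Ideal SNR ratio when keeping half of the frames: {ideal_snr_ratio:.2f}")
```

The bi-linear/best-half combination comes out at about 6.2%. The idealized frame-count factor is about 0.71, which is somewhat milder than the halving of SNR I measured in the faint regions.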

For aligning images from various filters I use a common reference image from the luminance stack. This reference is of course not included in the stacking process, but it ensures that the resulting, stacked LRGB images are aligned to each other.

Next step will be to experiment with deconvolution on the luminance image, so stay tuned!

EXTRA NOTE ABOUT IMAGE COMBINING IN MAXIM:

I should mention a problem with MaxIm I encountered while combining the luminance data. I have two sets of sub-exposures - one from March 25th and another from March 27th. By mistake I used a different guide star on these two nights and as a consequence the two image series are pretty severely misaligned:

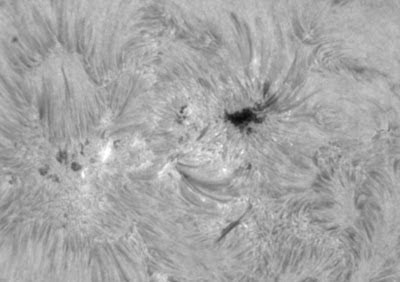

Two sub-exposures that are severely misaligned.

Still, the data from both nights is good, so I'll go ahead with alignment and combining. Note that the two nights produced different background levels - on the first I had ADU=1700 while on the second I got ADU=2300. In MaxIm I use the 'Auto - Star Matching' alignment mode, which works very well, then combine using the 'Sigma-clip' combine method and 'delta-level' normalization. The result is shown below.

Problem: combined image has a large offset where the sub-exposures fail to overlap.

I spent several days pondering this problem without success. Only when writing this blog post did the correct line of thought fall into place - and with that, a solution! I think the problem arises in two steps. First, during alignment, MaxIm sets pixel values outside the original field of view to zero (other programs often choose to use a median edge value). On my images this results in a lot of zeroes, due to the large misalignment between the two nights. Next, during image combination, these zero values create an offset in the combined result, as shown above. The solution is very simple: just activate the 'Ignore black pixels' option on the 'Combine' tab. As shown below, this fixes the problem. Of course the background still exhibits a discontinuity in noise level where the two data series fail to overlap, but this is quite natural and easy to handle later on.

Problem solved: use 'ignore black pixels' option!
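To illustrate the two-step explanation above, here is a toy sketch in plain Python. It is not MaxIm's actual algorithm: the "images" are 1-D rows of six pixels, the normalization is modelled as a simple additive offset (my assumption of what 'delta-level' roughly does), and sigma clipping is left out entirely. Only the background levels of 1700 and 2300 ADU come from my data; the function name `combine` and everything else are made up for the example.

```python
from statistics import mean


def combine(frames, ignore_black=False):
    """Average frames pixel by pixel.

    With ignore_black=True, zero-valued (padded) pixels are left out of the
    average, mimicking the idea behind an 'ignore black pixels' option.
    """
    combined = []
    for pixel_values in zip(*frames):
        if ignore_black:
            used = [v for v in pixel_values if v != 0]
        else:
            used = list(pixel_values)
        combined.append(mean(used) if used else 0)
    return combined


# After alignment, night 1 covers pixels 0-3 and night 2 covers pixels 2-5.
# Pixels outside each night's field of view are padded with zeros.
night1 = [1700, 1700, 1700, 1700, 0, 0]
night2_raw = [0, 0, 2300, 2300, 2300, 2300]

# Assumed additive normalization: shift night 2 to night 1's background level,
# touching only real pixels so that the padding stays at zero.
offset = 1700 - 2300
night2 = [v + offset if v != 0 else 0 for v in night2_raw]

frames = [night1] * 4 + [night2] * 4  # four sub-exposures per night

naive = combine(frames)                     # zeros drag the average down
fixed = combine(frames, ignore_black=True)  # zeros are skipped

print("naive:", naive)
print("fixed:", fixed)
```

In the naive result the pixels covered by only one night drop to half the background level, which is the kind of offset seen in my combined image. With the zeros ignored, the level is the same across the whole row; only the number of contributing frames (and hence the noise) differs between the regions.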